
FOSDEM 2013

This year, I was given the opportunity to go to Brussels to attend the best Free Software and Open Source event in Europe, also known as the Free and Open source Software Developers’ European Meeting (FOSDEM). It is one of the biggest open source conferences in Europe; it is a place where the developers of many open projects from around the world come together to have a beer and to talk about their progress. Over 5000 visitors come to Brussels every year to see hundreds of talks organised into dozens of tracks. It was actually my first time at an event this big. And it was great, indeed!

The main reason for our trip to Brussels was to present our project called LNST in the Testing and Automation Devroom. Unfortunately, due to the travel arrangements, we couldn’t make it to the second day of the conference. Nevertheless, it was a great experience. Saturday was crammed with great talks and they all seemed to happen at the same time! Hopefully, the organisers managed to record most of them and will make them available online soon.

Friday’s Beer Event

The conference actually started on February 1st with the beer event in probably the largest pub I have ever seen, the Delirium Café. It had at least 3 floors, all of which were full of geeks, as was a part of the street in front of the pub. There was a really large variety of beers to choose from, with alcohol content going up to (and sometimes probably even over) 10%. I personally liked Belgian beer. It is different from what we are used to in the Czech Republic, but in a good way.

Delirium Café in Brussels (src: http://deliriumcafe.be)

Saturday’s Talks

The official event was held at the Université Libre de Bruxelles (or ULB) campus which is situated in southern Brussels. The first talks on Saturday started mostly from 11:00, so there was some time to get yourself together if you had overdone it with the beer the previous day. The first thing we did was actually to get a coffee and a croissant. There was an improvised bar conveniently at the campus, which was really nice.

The first presentation we decided to see was about the architecture and the recent developments of GNU/Hurd from Samuel Thibault in the Microkernels and Component-based OS devroom. Samuel explained that Hurd is designed to give regular users as much of the freedom root has as possible (accessing hardware being the notable exception). For example, Hurd allows users to build and use their own TCP/IP stack. Due to the modularity of the kernel, you can replace the majority of the OS without even asking.

Then we decided to move to the Virtualisation track to see a talk about virtual networking. We got there late, and I must admit that I really didn’t understand the concept. On the other hand, what was really nice were the stands in buildings K and AW. They were usually overflowing with swag to take (or buy). Red Hat had a Fedora booth there with Jaroslav Řezník and Jiří Eischmann, giving out DVDs with Fedora 18 (apart from other things). I got the opportunity to try the new Firefox OS there. It looked okay, but only one of the four buttons on the phone actually worked. I am sure it will do better next year :-).

Željko Filipin talked from 13:30 in the Testing and Automation Devroom about the way Wikipedia is tested, which was really interesting. They use a set of tools called Selenium to automate web browsers. It provides a Ruby API to manipulate all the major browsers. This is very helpful during the testing process, as it allows scripting unit and regression tests easily.

My talk about the Linux Network Stack Test (LNST) project was scheduled right after that. I was really nervous about it, because it was my first serious public talk (and in English). I made it through without passing out, so I guess it went well. Still, I am not sure whether I made everything as clear as I possibly could. The devroom was very well organised (or at least that was the impression I got when I entered) thanks to R. Tyler Croy and others who were running it.

Slides: You can download the slides I used for the LNST presentation here.

The last two talks I saw there were about the Linux kernel; both were part of the Operating Systems main track. In the first one, Thomas Petazzoni from Free Electrons explained the challenges of ARM support in the kernel and described how Linux deals with supporting different ARM-based SoCs. The very last talk we attended was from the maintainer of the I2C subsystem in the Linux kernel, Wolfram Sang. Wolfram was talking about what it takes to be a kernel subsystem maintainer. He explained how a person becomes one and then focused mainly on his own experiences and how he prefers to do it.

Apparently, RMS was there attending the conference, but unfortunately I didn’t see him. $*#.! Well, maybe next time.

Brussels

There was not much time left, but we managed to find a while in the evening to go buy some chocolate and have a look around the city. To me, Brussels is something in the middle between Prague and London. They write everything in two languages, French and Dutch, but they seem to use French a lot more. If you are hungry there, you can get a variety of things from Thai to Spanish, Italian, and Mexican food. If you don’t know where to go, I can recommend a great tex-mex restaurant called ChiChi’s. As always, you can get a whole lot of junk food from McDonald’s. There are a couple of instances of Starbucks in the city and an infinite number of other cafés to maintain proper caffeine levels :-).

Anyway, it was both a great conference and a really nice trip. I hope I will be able to come next year as well!


Brief GDB Basics

In this post I would like to go through some of the very basic cases in which gdb can come in handy. I’ve seen people avoid using gdb, saying it is a CLI tool and therefore it would be hard to use. Instead, they opted for this:

std::cout << "qwewtrer" << std::endl;

DEBUG("stupid segfault already?");

That’s just stupid. In fact, printing a backtrace in gdb is as easy as typing two letters. I don’t appreciate lengthy debugging sessions that much either, but it’s something you simply cannot avoid in software development. What you can do to speed things up is to know the right tools and to be able to use them efficiently. One of them is the GNU debugger.

Example program

All the examples in the text will be referring to the following short piece of code. I have it stored as segfault.c and it’s basically a program that calls a function which results in a segmentation fault. The code looks like this:

/* Just a segfault within a function. */

#include <stdio.h>
#include <unistd.h>

void segfault(void)
{
    int *null = NULL;
    *null = 0;
}

int main(void)
{
    printf("PID: %d\n", getpid());
    fflush(stdout);

    segfault();

    return 0;
}

Debugging symbols

One more thing, before we proceed to gdb itself. Well, two actually. In order to get anything more than a bunch of hex addresses you need to compile your binary without stripping symbols and with debug info included. Let me explain.

Symbols (in this case) can be thought of simply as variable and function names. You can strip them from your binary either during compilation/linking (by passing the -s argument to gcc) or later with the strip(1) utility from binutils. People do this because it can significantly reduce the size of the resulting object file. Let’s see how it works exactly. First, compile the code while stripping the symbols:

[astro@desktop ~/MyBook/code]$ gcc -s segfault.c

Now let’s fire up gdb:

[astro@desktop ~/MyBook/code]$ gdb ./a.out
GNU gdb (GDB) Fedora (7.3.1-48.fc15)
Reading symbols from /mnt/MyBook/code/a.out...(no debugging symbols found)...done.

Notice the last line of the output. gdb is complaining that it didn’t find any debugging symbols. Now, let’s try to run the program and display the stack trace after it crashes:

(gdb) run

Starting program: /mnt/MyBook/code/a.out

PID: 21568

Program received signal SIGSEGV, Segmentation fault.

0x08048454 in ?? ()

(gdb) bt

#0 0x08048454 in ?? ()

#1 0x0804848d in ?? ()

#2 0x4ee4a3f3 in __libc_start_main (main=0x804845c, argc=1, ubp_av=0xbffff1a4, init=0x80484a0, fini=0x8048510,

rtld_fini=0x4ee1dfc0 , stack_end=0xbffff19c) at libc-start.c:226

#3 0x080483b1 in ?? ()

You can imagine that this won’t help you very much with the debugging. Now let’s see what happens when the code is compiled with symbols, but without the debuginfo.

[astro@desktop ~/MyBook/code]$ gcc segfault.c
[astro@desktop ~/MyBook/code]$ gdb ./a.out
GNU gdb (GDB) Fedora (7.3.1-48.fc15)
Reading symbols from /mnt/MyBook/code/a.out...(no debugging symbols found)...done.
(gdb) run
Starting program: /mnt/MyBook/code/a.out
PID: 21765

Program received signal SIGSEGV, Segmentation fault.
0x08048454 in segfault ()
(gdb) bt
#0  0x08048454 in segfault ()
#1  0x0804848d in main ()

As you can see, gdb still complains about the symbols in the beginning, but the results are much better. The program crashed when it was executing the segfault() function, so we can start looking for any problems from there. Now let’s see what we get when debuginfo gets compiled in.

[astro@desktop ~/MyBook/code]$ gcc -g segfault.c
[astro@desktop ~/MyBook/code]$ gdb ./a.out
GNU gdb (GDB) Fedora (7.3.1-48.fc15)
Reading symbols from /mnt/MyBook/code/a.out...done.
(gdb) run
Starting program: /mnt/MyBook/code/a.out
PID: 21934

Program received signal SIGSEGV, Segmentation fault.
0x08048454 in segfault () at segfault.c:9
9       *null = 0;

That’s more like it! gdb printed the exact line from the code that caused the program to crash! That means, every time you try to use gdb to get some useful directions for debugging, make sure that you don’t strip symbols and have debuginfo available!

Start, Stop, Interrupt, Continue

These are the basic commands to control your application’s runtime. You can start a program by writing

(gdb) run

When a program is running, you can interrupt it with the usual Ctrl-C, which will send SIGINT to the debugged process. When the process is interrupted, you can examine it (this is described later in the post) and then either stop it completely or let it continue. To stop the execution, write

(gdb) kill

If you’d like to let your program carry on executing, use

(gdb) continue

I should point out that in gdb, you can abbreviate most of the commands to as little as a single character. For instance, r can be used for run, k for kill, c for continue and so on :).

Stack traces

Stack traces are very powerful when you need to localize the point of failure. Seeing a stack trace will point you directly to the function that caused your program to crash. If your project is small or you keep your functions short and straightforward, this could be all you’ll ever need from a debugger. You can display a stack trace in case of a segmentation fault or generally anytime the program is interrupted. The stack trace can be displayed by the backtrace or bt command:

(gdb) bt
#0  0x08048454 in segfault () at segfault.c:9
#1  0x0804848d in main () at segfault.c:17

You can see that the program stopped (more precisely, was killed by the kernel with a SIGSEGV signal) at line 9 of the segfault.c file while it was executing the function segfault(). The segfault function was called directly from the main() function.

Listing source code

When the program is interrupted (and was compiled with debuginfo), you can list the code directly by using the list command. It will show the precise line of code (with some context) where the program was interrupted. This can be more convenient, because you don’t have to go back into your editor and search for the place of the crash by line numbers.

(gdb) list

4 #include <unistd.h>

5

6 void segfault(void)

7 {

8 int *null = NULL;

9 *null = 0;

10 }

11

12 int main(void)

13 {

We know (from the stack trace) that the program has stopped at line 9. This command will show you exactly what is going on around there.

Breakpoints

Up to this point, we only interrupted the program by sending a SIGINT to it manually. This is not very useful in practice though. In most cases, you will want the program to stop at some exact place during the execution, to be able to inspect what is going on, what values the variables have, and possibly to manually step further through the program. To achieve this, you can use breakpoints. By attaching a breakpoint to a line of code, you say that you want the debugger to interrupt the program every time it is about to execute that particular line and wait for your instructions.

A breakpoint can be set by the break command (before the program is executed) like this:

(gdb) break 8
Breakpoint 2 at 0x4005c0: file segfault.c, line 8.

I’m using a line number to specify where to put the break, but you can also use a function name and a file name. There are multiple variants of arguments to the break command.

You can list the breakpoints you have set up by writing info breakpoints:

(gdb) info breakpoints
Num     Type           Disp Enb Address            What
1       breakpoint     keep n   0x00000000004005d8 in main at segfault.c:14
2       breakpoint     keep y   0x00000000004005c0 in segfault at segfault.c:8

To disable a breakpoint, use the disable <Num> command with the number you find in the info output.

Stepping through the code

When gdb stops your application, you can resume the execution manually, step by step through the instructions. There are several commands to help you with that. You can use the step and next commands to advance to the following line of code. However, these two commands are not entirely the same. Next will ‘jump’ over function calls and run them at once. Step, on the other hand, will allow you to descend into the function and execute it line by line as well. When you decide you’ve had enough of stepping, use the continue command to resume the execution uninterrupted until the next breakpoint.

Breakpoint 1, segfault () at segfault.c:8
8       int *null = NULL;
(gdb) step
9       *null = 0;

There are multiple things you can do during the process of stepping through a running program. You can dump values of variables using the print command, even set values to variables (using set command). And this is definitely not all. Gdb is great! It really can save a lot of time and lets you focus on the important parts of software development. Think of it the next time you try to bisect errors in the program by inappropriate debug messages :-).


Magical container_of() Macro

When you begin with the kernel, and you start to look around and read the code, you will eventually come across this magical preprocessor construct. What does it do? Well, precisely what its name indicates. It takes three arguments — a pointer, type of the container, and the name of the member the pointer refers to. The macro will then expand to a new address pointing to the container which accommodates the respective member. It is indeed a particularly clever macro, but how the hell can this possibly work? Let me illustrate …

The first diagram illustrates the principle of the container_of(ptr, type, member) macro for those who might find the above description too clumsy.

Below is the actual implementation of the macro from the Linux kernel:

#define container_of(ptr, type, member) ({ \
    const typeof( ((type *)0)->member ) *__mptr = (ptr); \
    (type *)( (char *)__mptr - offsetof(type,member) );})

At first glance, this might look like a whole lot of magic, but it isn’t quite so. Let’s take it step by step.

Statements in Expressions

The first thing to catch your attention might be the structure of the whole expression. The statement should return a pointer, right? But there is just some kind of weird ({}) block with two statements in it. This is in fact a GNU extension to the C language called a braced-group within expression. The compiler will evaluate the whole block and use the value of the last statement contained in the block. Take for instance the following code. It will print 5.

int x = ({1; 2;}) + 3;

printf("%d\n", x);

typeof()

This is a non-standard GNU C extension. It takes one argument and returns its type. Its exact semantics are thoroughly described in the gcc documentation.

int x = 5;

typeof(x) y = 6;

printf("%d %d\n", x, y);

Zero Pointer Dereference

But what about the zero pointer dereference? Well, it’s a little pointer magic to get the type of the member. It won’t crash, because the expression itself will never be evaluated. All the compiler cares for is its type. The same situation occurs in case we ask back for the address. The compiler again doesn’t care for the value, it will simply add the offset of the member to the address of the structure, in this particular case 0, and return the new address.

struct s {
    char m1;
    char m2;
};

/* This will print 1 */
printf("%zu\n", (size_t)&((struct s *)0)->m2);

Also note that the following two definitions are equivalent:

typeof(((struct s *)0)->m2) c;
char c;

offsetof(st, m)

This macro will return the byte offset of a member from the beginning of the structure. It is even part of the standard library (available in stddef.h). Not in kernel space though, as the standard C library is not present there. It is a little bit of the same zero pointer dereference magic as we saw earlier and, to avoid that, modern compilers usually offer a built-in that implements it. Here is the messy version (from the kernel):

#define offsetof(TYPE, MEMBER) ((size_t) &((TYPE *)0)->MEMBER)

It returns an address of a member called MEMBER of a structure of type TYPE that is stored in memory from address 0 (which happens to be the offset we’re looking for).

Putting It All Together

#define container_of(ptr, type, member) ({ \
    const typeof( ((type *)0)->member ) *__mptr = (ptr); \
    (type *)( (char *)__mptr - offsetof(type,member) );})

When you look more closely at the original definition from the beginning of this post, you will start wondering if the first line is really good for anything. You will be right. The first line is not intrinsically important for the result of the macro, but it is there for type checking purposes: the assignment makes the compiler warn if ptr does not point to the type of the member. And what does the second line really do? It subtracts the offset of the structure’s member from the member’s address, yielding the address of the container structure. That’s it!

After you strip all the magical operators, constructs and tricks, it is that simple :-).


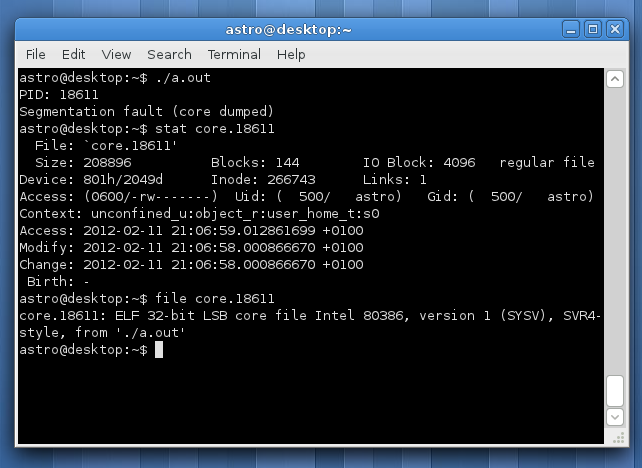

Core dumps in Fedora

This post will demonstrate a way of obtaining and examining a core dump on Fedora Linux. A core file is a snapshot of the working memory of some process. Normally there’s not much use for such a thing, but when it comes to debugging software it’s more than useful. Especially for those hard-to-reproduce random bugs. When your program crashes in such a way, it might be your only source of information, since the problem might not come up again in the next million executions of your application.

The thing is, creation of core dumps is disabled by default in Fedora, which is fine since the user doesn’t want to have some magic file spawned in his home folder every time an app goes down. But we’re here to fix stuff, so how do you turn it on? Well, there are a couple of things that might prevent the cores from appearing.

1. Permissions

First, make sure that the program has write permission for the directory the core is going to be written to. By default, core files are created in the current working directory of the process, which is often (but not always) the directory the executable resides in. From my experience, core dump creation doesn’t work on programs executed from NTFS drives mounted through ntfs3g.

2. ulimit

This is the place where the core dump creation is disabled. You can see for yourself by using the ulimit command in bash:

astro@desktop:~$ ulimit -a
core file size          (blocks, -c) 0
data seg size           (kbytes, -d) unlimited
scheduling priority             (-e) 0
file size               (blocks, -f) unlimited
pending signals                 (-i) 15976
max locked memory       (kbytes, -l) 64
max memory size         (kbytes, -m) unlimited
open files                      (-n) 1024
pipe size            (512 bytes, -p) 8
POSIX message queues     (bytes, -q) 819200
real-time priority              (-r) 0
stack size              (kbytes, -s) 8192
cpu time               (seconds, -t) unlimited
max user processes              (-u) 1024
virtual memory          (kbytes, -v) unlimited
file locks                      (-x) unlimited

To enable core dumps set some reasonable size for core files. I usually opt for unlimited, since disk space is not an issue for me:

ulimit -c unlimited

This setting applies only to the current shell though. To keep this setting, you need to put the above line into your ~/.bashrc or (which is cleaner) adjust the limits in /etc/security/limits.conf.

3. Ruling out ABRT

In Fedora, cores are sent to the Automatic Bug Reporting Tool (ABRT), so that they can be posted to the Red Hat Bugzilla for the developers to analyse. The kernel is configured so that all core dumps are piped straight to abrt. This is set in /proc/sys/kernel/core_pattern. My settings look like this:

|/usr/libexec/abrt-hook-ccpp %s %c %p %u %g %t %h %e 636f726500

That means that all core files are passed to the standard input of abrt-hook-ccpp. Change this setting simply to “core”, i.e.:

core

Then the core files will be stored in the current working directory of the crashed process and will be called core.PID (the PID suffix is appended when /proc/sys/kernel/core_uses_pid is non-zero).

4. Send Right Signals

Not every process termination leads to dumping core. Keep in mind that a core file will be created only if the process is terminated by a signal whose default action is to dump core, for example:

- SIGSEGV

- SIGFPE

- SIGABRT

- SIGILL

- SIGQUIT

Example program

Here’s a series of steps to test whether your configuration is valid and the cores will appear where they should. You can use this simple program to test it:

/* Print PID and loop. */

#include <stdio.h>
#include <unistd.h>

void infinite_loop(void)
{
    while(1);
}

int main(void)
{
    printf("PID: %d\n", getpid());
    fflush(stdout);

    infinite_loop();

    return 0;
}

Compile the source, run the program and send a signal as follows to get a memory dump:

astro@desktop:~$ gcc infinite.c
astro@desktop:~$ ./a.out &
[1] 19233
PID: 19233
astro@desktop:~$ kill -SEGV 19233
[1]+  Segmentation fault      (core dumped) ./a.out
astro@desktop:~$ ls core*
core.19233

Analysing Core Files

If you already have a core, you can open it using the GNU Debugger (gdb). For instance, to open the core file that was created earlier in this post and display a backtrace, do the following:

astro@desktop:~$ gdb a.out core.19233
GNU gdb (GDB) Fedora (7.3.1-47.fc15)
Copyright (C) 2011 Free Software Foundation, Inc.
Reading symbols from /home/astro/a.out...(no debugging symbols found)...done.
[New LWP 19233]
Core was generated by `./a.out'.
Program terminated with signal 11, Segmentation fault.
#0  0x08048447 in infinite_loop ()
Missing separate debuginfos, use: debuginfo-install glibc-2.14.1-5.i686
(gdb) bt
#0  0x08048447 in infinite_loop ()
#1  0x0804847a in main ()
(gdb)

Test Driven Development

Another book from my huge TOREAD pile is Test Driven Development: By Example from Kent Beck. I learned about this method of development from the Extreme Programming book (also from Kent Beck) and I tried to take advantage of it during the coding phase of my bachelor’s thesis. It’s a great way to develop software! Having your software covered by unit tests, you are way more confident with it. Along with this comes an assurance that you didn’t break some part of your software when you add or change something. Without proper testing (either regression or unit) you just try stuff and see what happens. And it’s usually accompanied by glass shattering sounds and echoes of screaming people.

There is a metaphor (according to Steve McConnell in Code Complete) for software development that describes the process as drowning in tar pits with dinosaurs. I was a bit skeptical towards this metaphor at first, but it’s damn accurate when you code, but don’t test.

What exactly can you find in the book? In the first hundred pages, Mr. Beck explains test driven development on a case study of WyCash — some software that handles money. It’s a step-by-step (and by step I mean really small steps) guide through the whole process. To be honest, I didn’t like this part of the book. It explains how exactly TDD should be done, but it’s sooo annoying to read about copying methods from one place to another and replacing return 5; by return x+y;.

The second part gets a little more interesting. It’s about xUnit, the family of widely used frameworks for unit testing (sUnit for Smalltalk, jUnit for Java, CppUnit for C++ etc). In this part, you will learn how the framework works with test cases, test suites and fixtures, the setUp() and tearDown() methods etc. Kent Beck is actually the original author of sUnit, the first framework from this family, so all information you get here comes directly from the source. He actually explains how to implement such a framework using the TDD method.

And the last part covers the TDD method in general, answers some questions that might spring to mind, discusses the usage of design patterns together with TDD and explains some situations you might find yourself in while using the test driven development method.

Red-Green-Refactor

I’d like to point out one last principle — the Red-Green-Refactor. It’s a sort of mantra that will guide you through the whole book. It explains pretty much the whole routine of TDD in three steps (but you have to read the book to understand it properly!).

- Write a test — a test for some new functionality, that will obviously fail (hence the red sign)

- Make it work — write as little code as possible to make the test execute correctly (copy some code, fake the implementation, whatever, just make it work, turn the red to green)

- Refactor — at this point, the functionality is already done, so let’s focus only on the quality of design and implementation

It’s surprisingly easy, but extremely powerful, if you think about that in broader terms. I definitely recommend this book, maybe along with the Extreme Programming from the same author.

DRY Principle

I read a couple of books on software development lately and I stumbled upon some more principles of software design that I want to talk about. And the first and probably the most important one is this:

Don’t repeat yourself.

Well, this is new … I mean as soon as any programmer learns about functions and procedures, he knows, that it is way better to split things up into smaller reusable pieces. The thing is, this principle should be used in much broader terms. As in NEVER EVER EVER repeat any information in a software project.

The long version of the DRY principle, which was authored by Andy Hunt and David Thomas, states:

Every piece of knowledge must have a single, unambiguous, authoritative representation within a system.

The key is, that it doesn’t apply only to the code. Every single time you have something stored in two places simultaneously, you can be almost certain, that it will cause pain at some point in the future of your project. It will sneak up on you from behind and hit you with a baseball bat. And then keep kicking you while you’re down. This is one of those cases in which foretelling future works damn well.

The authors of The Pragmatic Programmer show this with a great example involving database schemas. At one point in your project you make up a database schema, usually on paper. Then you store it with your project somewhere in plain text or something. The people responsible for writing code will look in the file, create database scripts for creating the database and start putting various queries in the code.

What happened now? You have two definitions of the database schema in your system. One in the text file and another as a database script. After a while, the customer shows up and demands some additional functionality that requires altering the schema. Well, that shouldn’t be that much of a problem. You simply change the database script, alter the class that handles queries and go get some lunch. Everything works fine, but after a year or two, you might want to change the schema a bit further. But you don’t remember a thing about the project, so you will probably want to look at the design first, to catch up. Or you hire someone new, who will look at the schema definition. And it will be wrong.

Storing something multiple times is painful for a number of reasons. First, you have to sync changes between the representations. When you change the schema, you have to change the design too and vice versa. That’s extra work, right? And as soon as you forget to alter both, it’s a problem.

The solution to this particular problem is code generation. You can keep a single database definition and a very simple script that turns it into the database script. Here’s a wonderful illustration (by Bruno Oliveira) of how that works :).


Learning Ruby

I always wanted to learn Ruby. It became so popular over the last couple of years and I hear people praise the language everywhere I go. Well, time has come and I cannot postpone this anymore (not with a clear conscience anyway). So I’m finally learning Ruby. I went quickly over the net and also our campus library today, to see what resources are available for the Ruby newbies.

Resources

There is a load of good resources on the internet, so before you run into a bookstore to buy 3000 pages about Ruby, consider starting with the online books and tutorials. You can always buy something on paper later (I personally like stuff the old-fashioned way — on paper more). Here is a list of what I found:

- Programming Ruby by David Thomas and Andy Hunt: that’s right, an online version of the Ruby book from the authors of The Pragmatic Programmer

- Ruby Programming WikiBook

- Ruby Learning

That would be some of the online sources for Ruby beginners. And now something on paper:

- The Ruby Way by Hal Fulton — Great, but sort-of-a big-ass book. Just don’t go for the Czech translation, it’s horrifying

- Learning Ruby by Michael Fitzgerald: a little more lightweight, recommended for bus-size reading!

I personally read The Ruby Way at home and the Learning Ruby when I’m out somewhere. Both of them are good. These are the books that I read (because I could get them in the library). There is a pile of other titles like:

- The Ruby Programming Language

- Ruby Cookbook

- Eloquent Ruby

- Programming Ruby 1.9

- Ruby Best Practices

- Beginning Ruby

Just pick your own and you can start learning :-).

Installation

Ruby is an interpreted language, so we will need to install the Ruby interpreter. On Linux it’s fairly simple, as most distributions have Ruby packaged in their software repositories. On Fedora 15, write sudo yum install ruby. On Debian-based distros, sudo apt-get install ruby. If you are a Windows user, please, do yourself a favor and try Ubuntu!

To check whether the Ruby interpreter is available, go to the terminal and type

$ ruby --version

Hello Matz!

The only thing that’s missing is the famous Hello World :-)! In Ruby the code looks something like this:

#!/usr/bin/env ruby
puts "Hello Matz!"

Summary

From what I saw during yesterday’s quick tour through Ruby, I can say that it’s a very interesting language. I’d recommend anyone to give it a shot! I definitely will.

Update: Stay tuned for more! I’m working on a Ruby language cheat sheet right now.


The Pragmatic Programmer

Another great piece of computer literature I found in our campus library! I’m talking about The Pragmatic Programmer by Andy Hunt and David Thomas. And yes, it’s gooood :)!

The title of the book (in its Czech version) states: “How to become a better programmer and create high-quality software.” Right? I want that!

It’s a sort of compilation of advice on software development from a practical point of view, based on the authors’ experience. A lot of books come with a load of theory, which is good too, but when you’re digging through mounds of formal methods, it’s very easy to forget about the practical side of software development.

The very first chapter talks about the career of a programmer or software developer. The authors say to treat your career choices as investments in your future. A pragmatic programmer should invest often and in a wide range of technologies. I don’t like the investment metaphor, but I like the thought. The computing train is moving fast, and it will run you over at some point if you don’t jump on.

What I liked most about this book is the emphasis on automating routine tasks through scripting and on the DRY principle. Having good knowledge of the environment and tools you work with is key in any profession. But programmers (including myself) often tend to focus on what we are doing and on the final results rather than on how we do it. And frankly, every time I stop and think about what I could do better or automatically, I find some weak spot.

The process of programming, as in actually writing the code, should not be dismissed as trivial. You can save yourself a lot of stress by being creative in this area. The DRY principle is somewhat connected to this. If you repeat yourself, you not only work inefficiently (you’re doing stuff twice), but you also set a trap for yourself, which you are bound to step into later in the project.

Overall the book is great and I can definitely recommend it. It’s a bit over 200 pages, so it shouldn’t take a year to read. It’s also very well written and full of jokes, which makes it fun to read!

Errors as Part of Interface

I was writing this code the other day. It’s a very small program: a POP3 client that downloads messages. And I just couldn’t come up with an easy and consistent way to report errors. I wanted something lightweight that actually makes sense. I was looking through some code, hoping that someone else had a good strategy I could rip off. From what I saw, the most common strategy is none whatsoever. Well, I didn’t like that one bit …

But worry no longer! Steve McConnell came to the aid of a coder in distress once again. I looked into my new copy of Code Complete and here’s what I found:

Throw exceptions at the right level of abstraction.

This statement makes a very interesting point. The errors that can occur in your code, regardless of whether it’s an exception thrown or a status code returned, should be at the same level of abstraction as the unit, class or even routine that they happen in. For example, imagine a function called downloadAndPrintReport() that exits with MALLOC_FAILED. You see, this just isn’t right. The malloc failure is the cause of the problem, not the problem itself, and you (or the user) cannot react appropriately. I mean, which malloc() call failed? Does it mean the report wasn’t even downloaded, or that it was downloaded but not printed? What the hell is malloc anyway? The user doesn’t know!

Conclusion

Your error reports should be informative and useful to the receiver (which can be either a user or some parent code that deals with the error). By sticking to the current abstraction, your chances of delivering a good report improve considerably. When downloadAndPrintReport() returns with UNABLE_TO_DOWNLOAD_REPORT, you can reopen the connection and try again later. In case of UNABLE_TO_PRINT_REPORT, you can store the report in a file instead of printing it.
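
Here is a minimal C++ sketch of that idea. Everything in it is made up for illustration: the ReportStatus enum, the two helper functions and the boolean switches that fake a broken network or printer are assumptions, not code from the POP3 client. The point is only that downloadAndPrintReport() never leaks a low-level cause to its caller.

```cpp
#include <string>

// Hypothetical status codes, all at the abstraction level of
// "download and print a report".
enum class ReportStatus { Ok, UnableToDownloadReport, UnableToPrintReport };

// Hypothetical low-level step. Whatever goes wrong inside (malloc,
// sockets, DNS), the caller only learns that the download failed.
bool downloadReport(bool networkUp, std::string& out) {
    if (!networkUp) return false;
    out = "report body";
    return true;
}

// Hypothetical low-level step standing in for real printing code.
bool printReport(const std::string& report, bool printerReady) {
    return printerReady && !report.empty();
}

// Translates low-level failures into errors the caller can react to.
ReportStatus downloadAndPrintReport(bool networkUp, bool printerReady) {
    std::string report;
    if (!downloadReport(networkUp, report)) {
        return ReportStatus::UnableToDownloadReport;
    }
    if (!printReport(report, printerReady)) {
        return ReportStatus::UnableToPrintReport;
    }
    return ReportStatus::Ok;
}
```

A caller can now switch on the result: retry on UnableToDownloadReport, fall back to saving a file on UnableToPrintReport.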

Best Practices in Error Handling

According to Murphy’s law, “Anything that can go wrong will go wrong”. And if Mr. Murphy were also a software engineer, he would certainly add “and anything that cannot go wrong will go wrong as well”. Wise man, that Murphy, but what does it mean for us, the programmers out there in the trenches?

Error handling and reporting is a programming nightmare. It’s extra work, it pollutes the happy path of your code with a whole bunch of weird if statements and it forces you to return sets of mysterious error codes from functions. God, I hate error reporting (more than I hate New Jersey).

It might not seem very important, but it’s crucial to settle on an error handling strategy and stick with it through the whole project. The error reporting code will be literally everywhere. If you choose a poor strategy in the beginning, all of your code will be condemned to be ugly and inconsistent even before you start writing it.

There are multiple problems that you need to address in error reporting. The most important thing is to deliver a useful report to the user. The error message should say what happened and why it happened. A stack trace can help you find exactly what happened, but it generally won’t make the user very happy. My personal favorite format for reporting errors in terminal apps looks like this:

<program_name>: <what_happened>: <why_it_happened>

It’s inspired by the GNU coreutils error reporting format. The first section is always the program name, so the user knows who the message is coming from. The second section says what happened or what the error prevented from happening (e.g. “Cannot load configuration” or “Unable to establish remote connection”). Finally, the last section tells the user what caused the inconvenience, for instance “File ‘configuration.txt’ not found” or “Couldn’t resolve remote address”.

This gives the user complete insight into what happened, yet it won’t scare them off with too much programming detail. In fact, revealing too much about your errors (stack traces, memory dumps etc.) might be a potential security risk.

Another criterion for evaluating an error reporting strategy is how well it blends with the code. Generally, there are two approaches: centralized and decentralized error handling.
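
The three-part format is easy to wrap in a tiny helper. This is only a sketch: formatError, reportError and the program name pop3get used below are names I made up for the example.

```cpp
#include <iostream>
#include <string>

// Builds "<program_name>: <what_happened>: <why_it_happened>".
// The function name and parameters are hypothetical.
std::string formatError(const std::string& program,
                        const std::string& what,
                        const std::string& why) {
    return program + ": " + what + ": " + why;
}

// Writes the formatted message to standard error, one line per error.
void reportError(const std::string& program,
                 const std::string& what,
                 const std::string& why) {
    std::cerr << formatError(program, what, why) << '\n';
}
```

Calling reportError("pop3get", "Cannot load configuration", "File 'configuration.txt' not found") writes a single line to standard error in the format above.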

Centralized

The centralized way involves some sort of central database or storage of errors (usually a module or a header file) where all the error messages are stored. In code, you pass an error code to some function and it does all the work for you.

A big plus of this approach is that you have everything in one place. If you want to change something in error reporting, you know exactly where to go. On the other hand, everything in your software will depend on this one component. When you decide to reuse some code, you’ll need to take the error handling code with it. Also, as your program grows, the number of errors will grow as well, which can result in a huge pile of code in one place that will be very vulnerable to mistakes (since everyone will want to edit it to add their own errors).
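
A centralized scheme could look roughly like the following sketch. The ErrorCode values and the errorMessage function are hypothetical; in a real project this would live in one shared header that every module includes.

```cpp
#include <string>

// Hypothetical central list of every error the whole program can report.
enum class ErrorCode { ConfigNotFound, AddressNotResolved, OutOfMemory };

// The single place where error codes are turned into messages.
// Every module that reports an error depends on this function.
const char* errorMessage(ErrorCode code) {
    switch (code) {
        case ErrorCode::ConfigNotFound:
            return "File 'configuration.txt' not found";
        case ErrorCode::AddressNotResolved:
            return "Couldn't resolve remote address";
        case ErrorCode::OutOfMemory:
            return "Out of memory";
    }
    return "Unknown error";  // reached only for values outside the enum
}
```

Both the convenience and the coupling are visible here: adding an error means touching this one enum and this one switch, no matter which module the error belongs to.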

Decentralized

The decentralized approach to error reporting puts errors in the places where they can happen. They’re part of the interface of the respective modules. In C, every module (sometimes even every function) would have its own set of error codes. In C++, a class would have a set of exceptions associated with it.

In my opinion, it’s a little harder to maintain and to keep consistent than the centralized way, but if you have the discipline to stick with it, it results in elegant and independent code. Somebody could say that there will be a lot of duplicates of (let’s say) the 5 most common errors, like IO failures and memory errors. Well, this is a problem of decentralized error reporting. You can minimize it by keeping your errors in context with the abstraction of the interface they fit in. For instance, class Socket will throw exceptions like ConnectionError or ReceivingError, not MallocError, FileError or even UnknownError. A malloc failure is the cause that resulted in a problem with reading data, so from the point of view of the Socket class it’s a reading error.

These are the two basic ways of error handling. I will write separate posts about a few concrete common strategies that I know and find useful or at least good to know (exceptions, error codes etc.).
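
To make the Socket example a bit more concrete, here is a rough C++ sketch. The class is a toy: the reachable and simulateAllocFailure switches are hypothetical stand-ins for a real network and a real allocation failure, and the exception classes are simply derived from std::runtime_error.

```cpp
#include <new>
#include <stdexcept>
#include <string>

// Hypothetical exceptions that are part of the Socket interface.
class ConnectionError : public std::runtime_error {
public:
    explicit ConnectionError(const std::string& why)
        : std::runtime_error(why) {}
};

class ReceivingError : public std::runtime_error {
public:
    explicit ReceivingError(const std::string& why)
        : std::runtime_error(why) {}
};

class Socket {
public:
    // `reachable` fakes whether the remote host can be reached.
    void connect(bool reachable) {
        if (!reachable) throw ConnectionError("remote host is unreachable");
    }

    // `simulateAllocFailure` fakes a failed buffer allocation.
    std::string receive(bool simulateAllocFailure) {
        try {
            if (simulateAllocFailure) throw std::bad_alloc();
            return "data";
        } catch (const std::bad_alloc&) {
            // Translate the low-level cause into an error that makes
            // sense at the abstraction level of Socket.
            throw ReceivingError("not enough memory for the receive buffer");
        }
    }
};
```

Code that uses Socket only ever needs to catch ConnectionError and ReceivingError; it never has to know that an allocation was involved.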